For the past few days I've been working on installing VMware ESXi on some Cisco UCS C-Series servers. This was a fresh install in a brand-new environment, so I didn't have to worry much about interrupting current production: I'd just set up the servers, storage, and software (vSphere 5.1) alongside the current environment and then migrate the physical machines to VMs later. Cisco UCS servers have been building a name for themselves in data centers over the past couple of years. Many people think Cisco only offers blades, but the UCS C-Series are rackmount servers that offer many of the same advantages as blades, at a more SMB-friendly price than the blades (known as the B-Series). The C-Series is managed through the Cisco UCS Integrated Management Controller (CIMC), as shown in Figure A.

So, all you need to do is power up your UCS, give it an IP address, and then connect to the CIMC over the network.

If you're going to load ESXi on these servers, there are a few things to consider when ordering them. For instance, you cannot boot ESXi from a software RAID controller. Even if you have local/internal hard drives and can install ESXi on them, you will not be able to boot from them if they sit behind the software RAID controller: "VMware ESX/ESXi or any other virtualized environments are not supported for use with the embedded MegaRAID controller. Hypervisors such as Hyper-V, Xen, or KVM are also not supported for use with the embedded MegaRAID controller" (source: ).

If you're going to use a SAN and don't need the internal hard drives at all, I recommend what Cisco calls the Flexible Flash Card. This card is essentially an SD card that comes preconfigured with four virtual drives: the first holds the Cisco UCS Server Configuration Utility, the second is the HV (Hypervisor) drive, the third contains the Cisco drivers, and the fourth holds the Cisco Host Upgrade Utility.

ESXi is installed on the HV drive, which can be made bootable from within the CIMC. This is much easier than worrying about which kind of RAID controller to get, and I believe it is a little less expensive than buying local drives with a hardware RAID controller.
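To make the card layout concrete, here is a small Python model of the four virtual drives and of the one change the CIMC step makes: enabling the HV drive and marking it bootable. It is a hypothetical illustration only; the class, the functions, and the short drive labels are made up for this sketch and are not a Cisco API. The real configuration is done in the CIMC web interface.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class VirtualDrive:
    """One preconfigured partition on the Flexible Flash card (hypothetical model)."""
    name: str
    purpose: str
    enabled: bool = False
    bootable: bool = False


def default_flexflash_layout() -> list[VirtualDrive]:
    """The four virtual drives, in the order described above."""
    return [
        VirtualDrive("SCU", "Cisco UCS Server Configuration Utility"),
        VirtualDrive("HV", "Hypervisor (ESXi install target)"),
        VirtualDrive("Drivers", "Cisco drivers"),
        VirtualDrive("HUU", "Cisco Host Upgrade Utility"),
    ]


def enable_hypervisor_drive(drives: list[VirtualDrive]) -> VirtualDrive:
    """Enable the HV drive and mark it bootable; other drives are left as they are."""
    for drive in drives:
        if drive.name == "HV":
            drive.enabled = True
            drive.bootable = True
            return drive
    raise ValueError("no HV virtual drive in this layout")


if __name__ == "__main__":
    layout = default_flexflash_layout()
    enable_hypervisor_drive(layout)
    for d in layout:
        print(f"{d.name:8} enabled={d.enabled} bootable={d.bootable}")
```

Only the HV drive ends up enabled and bootable, which mirrors checking just that drive in the operational profile.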

After you order the proper configuration for what you're trying to do, the rest falls into place pretty easily. Here is a high-level overview of the steps from racking the server to booting ESXi.

To configure the CIMC:

1. Install the Flexible Flash Card in the server.

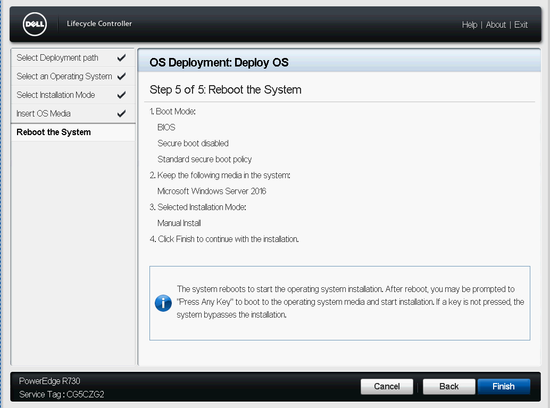

2. Rack the UCS server and plug a network cable into the management port.
3. Power it on and press F8 to configure the CIMC information (IP address, password, etc.).
4. From a computer on the same network, open a browser and connect to the CIMC using the IP address assigned in step 3.
5. Go to Server > Inventory > Storage to see the Flex Flash storage adapters.
6. Click Configure Operational Profile, then put a check next to the virtual drives you'd like to enable (i.e., the HV drive).

To install ESXi:

1. Click Launch KVM Console from the CIMC.
2. In the KVM window, click Virtual Media.
3. Click Add, browse to where you've downloaded your Cisco Custom ESXi .iso file, and put a checkmark next to it so it's mapped as the virtual CD/DVD.
4. Click back to the Monitor tab, click Macros, and select the Ctrl-Alt-Del macro to reboot the server.
5. Press F6 as the server is rebooting to change the boot device to the virtual CD/DVD.
6. Install ESXi as you normally would, but make sure to install it to the HV drive (not to the local drives).
7. When the install is complete, unmap the virtual CD/DVD and reboot the server using the macro again.
8. Press F6 to change the boot device to the HV drive; the server should now boot to the familiar yellow and gray ESXi screen.

This is a lot of information to take in, especially if you're new to the UCS world, so I highly recommend doing some additional reading.

To find out more, read up on RAID controllers and the UCS C220 M3; there's also plenty out there if you search for UCS or unified computing in general. This post is meant to be a high-level overview of things to consider in this specific configuration.

Lauren Malhoit has been in the IT field for over 10 years and has acquired several data center certifications. She's currently a Technology Evangelist for Cisco focusing on ACI and Nexus 9000. She has been writing for TechRepublic, Tech Pro Research, and VirtualizationAdmin.com for a few years.

As a Cisco Champion, EMC Elect, VMware vExpert, and PernixPro, Lauren stays involved in the IT community. Lauren has been a delegate for Tech Field Day and has also authored a book called VMware vCenter Operations Manager Essentials.

In a previous post, we discussed how to upgrade the Avago/LSI SMIS provider, which lets you interact with your Avago/LSI card through the Avago Storage Manager utility. However, you may not have installed the Avago/LSI SMIS provider on your VMware ESXi host, and you may still need visibility into a RAID operation or need to silence the alarm on an ESXi host running an Avago/LSI RAID controller. What are your options here?

Do you have to schedule a maintenance period to shut down your VMs (on a standalone host, in a lab environment, etc.), install the VIB, and reboot? Thankfully, another utility comes in handy when you need to interact with your Avago/LSI RAID controller and can’t schedule a maintenance period: Avago LSI StorCLI. Let’s look at installing Avago LSI StorCLI on VMware ESXi.

Installing Avago LSI StorCLI VMware ESXi

Similar to installing the Avago/LSI SMIS provider, installing StorCLI is straightforward: copy the .vib file to the ESXi host, then install it with the esxcli software vib install -v command pointed at the .vib file.
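As a sketch of that install step, the Python below only builds the esxcli command line and, by default, returns it as a string (a dry run) for pasting into the ESXi shell. The function names and the /tmp/storcli-example.vib path are hypothetical; substitute the path where you copied the actual StorCLI .vib. It assumes esxcli wants a full path to a local .vib, which is why relative paths are rejected, and it needs Python 3.8 or later for shlex.join.

```python
import posixpath
import shlex
import subprocess


def vib_install_command(vib_path: str) -> list[str]:
    """Build the esxcli call that installs a .vib already copied to the ESXi host."""
    # ESXi paths are POSIX-style, so check them with posixpath regardless of
    # the OS this script happens to run on.
    if not posixpath.isabs(vib_path):
        raise ValueError(f"expected a full path on the ESXi host, got {vib_path!r}")
    if not vib_path.endswith(".vib"):
        raise ValueError(f"expected a .vib file, got {vib_path!r}")
    return ["esxcli", "software", "vib", "install", "-v", vib_path]


def run(cmd: list[str], dry_run: bool = True) -> str:
    """Return the quoted command line (dry run) or execute it and return stdout."""
    if dry_run:
        return shlex.join(cmd)
    # Only meaningful where esxcli is on the PATH, i.e. the ESXi shell.
    result = subprocess.run(cmd, capture_output=True, text=True, check=True)
    return result.stdout


if __name__ == "__main__":
    # Hypothetical path: use wherever you copied the StorCLI .vib.
    print(run(vib_install_command("/tmp/storcli-example.vib")))
```

Run as-is, it prints esxcli software vib install -v /tmp/storcli-example.vib. Keeping dry run as the default means nothing touches the host until you deliberately pass dry_run=False.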

The nice difference between installing StorCLI and installing the SMIS provider is that StorCLI does not require a reboot. After installation, we change our working directory to /opt/lsi/storcli, where we can run the storcli command, for example storcli show. StorCLI can also silence the alarm on the controller. I like the tools Avago/LSI provides for interacting with the RAID controllers and effecting changes to the controller while booted into an operating system.
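The two StorCLI operations mentioned above can be sketched the same way. This assumes the binary is /opt/lsi/storcli/storcli (the install location described above) and that the controller you care about is /c0; the alarm=silence syntax follows the StorCLI reference as I understand it, so check it against the documentation for your StorCLI version before relying on it. The helper names are hypothetical. Calling the binary by its full path is equivalent to changing into /opt/lsi/storcli first.

```python
import posixpath
import shlex

# Install location described above; the binary name is assumed to be "storcli".
STORCLI_DIR = "/opt/lsi/storcli"
STORCLI = posixpath.join(STORCLI_DIR, "storcli")


def storcli_show() -> list[str]:
    """Equivalent to running 'storcli show' from inside STORCLI_DIR."""
    return [STORCLI, "show"]


def storcli_silence_alarm(controller: int = 0) -> list[str]:
    """Silence the audible alarm on one controller (/c0, /c1, ...)."""
    if controller < 0:
        raise ValueError("controller index must be zero or greater")
    return [STORCLI, f"/c{controller}", "set", "alarm=silence"]


if __name__ == "__main__":
    for cmd in (storcli_show(), storcli_silence_alarm(0)):
        print(shlex.join(cmd))
```

This prints /opt/lsi/storcli/storcli show and /opt/lsi/storcli/storcli /c0 set alarm=silence, ready to paste into the ESXi shell.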


The SMIS provider is a great way to monitor an Avago/LSI system remotely. If you don’t have the luxury of a maintenance period to reboot a host, StorCLI is a nice alternative to the Storage Manager application.

StorCLI is entirely command-line driven; however, it has most, if not all, of the functionality of the Storage Manager application. Installing Avago LSI StorCLI on VMware ESXi lets you interact with the Avago/LSI RAID controller without a maintenance period or a reboot.

VMware ESX software delivers high-performance I/O for PCI-based SCSI, RAID, Fibre Channel, and Ethernet controllers. To achieve high performance, these devices are accessed directly through device drivers in the ESX host, and not through a host operating system, as with VMware Workstation and VMware Server products. Support for I/O devices listed in this document is specific to device compatibility with the VMware ESX versions listed in the corresponding tables. This does not imply a statement of support for adding a device to any certified system. System vendors determine device compatibility with a given system. Please refer to the compatibility list provided by the system vendor before adding a certified device to a certified system. NOTE: IDE RAID and SATA RAID are not supported for the VMFS file system.

NOTE: You cannot simultaneously run devices using the mptscsi driver and ones using the mptscsi2xx driver. NOTE: You cannot simultaneously run devices using the aacraid driver and ones using the aacraidesx30 driver.


NOTE: You cannot simultaneously run devices using the megaraid2 driver and ones using the megaraidsas driver. NOTE: SAS 2.0 controllers are supported only with VMware ESX/ESXi 4.0u1 and newer releases. Dual-speed (6Gb/s and 3Gb/s) SAS controllers are supported only at 3Gb/s with vSphere 4.0 and earlier releases. NOTE: ESX 4.0, ESXi 4.0 Embedded, and ESXi 4.0 Installable are equivalent products from an I/O device compatibility perspective. In this guide we explicitly list only ESX compatibility information. If a product is listed as supported for ESX, it is also supported in the corresponding versions of ESXi Embedded and ESXi Installable. If you are having a technical issue with 3rd party HW/SW and it is not found on this list, please refer to our 3rd Party HW/SW support policy.
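The mutually exclusive driver pairs in these notes lend themselves to a simple lookup. The sketch below is a hypothetical planning helper, not a VMware tool: you supply the set of driver names you plan to use (how you collect them is up to you), and it reports every pair from the notes that appears together.

```python
from __future__ import annotations

# Driver pairs that, per the compatibility notes above, cannot run devices
# at the same time.
CONFLICTING_DRIVERS = [
    frozenset({"mptscsi", "mptscsi2xx"}),
    frozenset({"aacraid", "aacraidesx30"}),
    frozenset({"megaraid2", "megaraidsas"}),
]


def driver_conflicts(drivers_in_use: set[str]) -> list[tuple[str, str]]:
    """Return every conflicting pair whose two drivers are both in drivers_in_use."""
    conflicts = []
    for pair in CONFLICTING_DRIVERS:
        if pair <= drivers_in_use:  # both members of the pair are present
            first, second = sorted(pair)
            conflicts.append((first, second))
    return conflicts


if __name__ == "__main__":
    # Hypothetical inventory for a host being planned.
    planned = {"megaraid2", "megaraidsas", "e1000"}
    print(driver_conflicts(planned))
```

For the sample inventory this prints [('megaraid2', 'megaraidsas')]; a driver that appears without its counterpart is not reported.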


Compatibility information also exists beyond VMware's HCL: the community has assembled a list of components that ESX(i) has been found to work with. It also has something to say about software RAID controllers: the list includes a number of SATA controllers that provide RAID functionality through a software component in the drivers supplied with the controller.

Examples are the Intel ICH series and the nVidia MCP series. ESX 4.x and ESXi 4.x do not support that software RAID functionality, so you will only be able to access the individual drives connected to controllers such as these.